Proper database tuning is vital in ensuring that the SQL within your application is performing at top efficiency. Many database administrators and software developers take appropriate steps to ensure that all frequently used SQL and queries have the necessary indexes or logical files on the system.

What some miss though is the fact that there are many more opportunities to improve the performance of SQL than just simply the analysis and building of new indexes.

A closer look at the underlying causes of long running SQL requests will highlight these other opportunities and give you many more avenues for improving system performance.

Focus on SQL 'Elapsed Time' as the priority when performing any analysis of SQL and queries on your system. The requests that run the longest are likely the same ones that consume the most CPU, perform the most I/O and cause the most memory faulting on your system. They are surely the ones that the end-users care about the most. They don't see CPU, I/O and memory statistics. They see 'Response Time'. How long does my ENTER key or my 'Submit' button take to move to the next screen? How long does it take for my report to start printing?

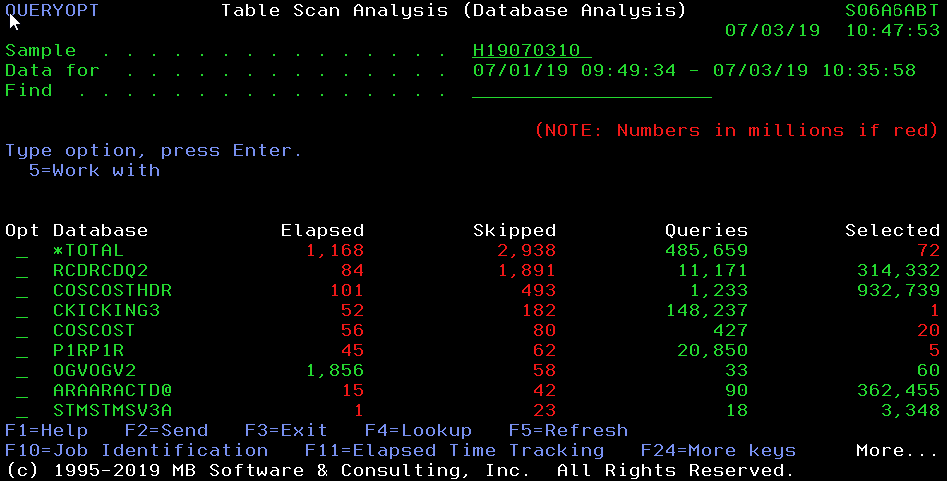

When analyzing SQL performance data, you might see requests with 'Index Advised' of YES. Existing customers using v6.0 or older versions of our Workload Performance Series software may see huge 'Skipped Records' in our Query Optimizer tool. While it is important to pay attention to IBM's advise for building new indexes, you must look closely at the resources actually consumed by these requests.

You could have a query that runs thousands of times per day over 200 records each time. If the total 'Elapsed Time' of all 200 requests is less than 3 seconds, you might want to reconsider wasting your time and system resources building this new index. Taking a system-wide look at SQL running on Production systems will keep you focused on what runs the most and runs the longest.

What else can be done besides building a new indexor changing the SQL to make it use an existing index?

- Analyze SQL timeouts on your system to determine whether operating system PTFs or QAQQINI setting changes are necessary. The process of SQL trying, timing out and retrying is significant - directly affecting the end-user response times.

- Research and understand 'Access Plans'. If your application software is running an SQL request hundreds of thousands of times per day with an access plan being rebuilt each time - request elapsed time will be suffering. An access plan should be built once, stored and re-used by these thousands of requests.

- Get educated about the difference between dynamic SQL and static SQL. The performance implications of dynamic SQL is significant for high volume applications. The code that performed fine in a test environment with 10,000 records may be costing your business hundreds of thousands of hardware dollars now that it is running in Production with millions of transactions.

- Ensure that all 'Open Data Paths' are reusable and are actually getting reused. An application opening and closing a database file for each insert of a record is completely unacceptable from a performance standpoint. You may need to recompile your stored procedures or COBOL and RPG programs that use imbedded SQL. Understand the difference between closing an open data path at the end of a call to a module versus at the end of the job. This is a compiler option.

- Give the operating system a chance to run code more efficiently. Running programs on a Production system with 'Debugging Views' of *ALL and 'Optimization Level' of *NONE is basically saying that you don't care about performance. This is telling the operating system that you want to debug your code on a Production system. Security auditors ought to care about this one. How about DBGVIEW(*NONE) and OPTIMIZE(*ALL) so that the compiler can try to get your code to perform optimally.

- Never allow your application to dynamically rebuild 'Access Paths' or dynamically sort large volumes of data. Sure if it's a one-time process, running in the middle of the night - it would be OK. If it is a recurring process, running thousands of times per day - permanent logical files or indexes with access path maintenance of *IMMED is near always the better option.

- Watch out for the impact of SQL 'Data Conversion'. Passing matching data types into an SQL request is much better than letting the operating system possibly perform a conversion for each record processed.

The Workload Performance Series software and its integrated Query Optimizer tool provides you with the data collection, data analysis and data mining tools necessary for researching the SQL performance opportunities highlighted earlier. Much of this can be done using the IBM  command STRDBMON and your favorite query tool. Our Query Optimizer tool automates this process and lets you focus on the data analysis and changes necessary to improve performance on your systems.

command STRDBMON and your favorite query tool. Our Query Optimizer tool automates this process and lets you focus on the data analysis and changes necessary to improve performance on your systems.

Caution should be used when running the 'Database Monitor (STRDBMON)' directly on your Production systems. Significant DASD, CPU, I/O and memory resources may be consumed by a manual data collection and analysis approach.

Great lengths have been taken within our tools to limit and control the performance impact of the data collection and analysis process.

The 'SQL Details' screen is shown here as an example of the detailed data that is captured by our Query Optimizer tool. The more important information is that provided by the system-wide data summarization screens. These screens show the SQL statistics at various levels for helping to pinpoint the recurring SQL requests that need further analysis.

After identifying the SQL requests running the most and running the longest, you can then look at whether the underlying causes are from lack of proper indexing, SQL timeout issues, access plan rebuilds, dynamic SQL issues, lack of reusing open data paths, improper compiling of programs, inefficient access path rebuilds or dynamic sorting of data, or maybe some simple pre-formatting of parameter data being passed into the requests. Regardless of the performance issues within your environment, if you are not focused on the things that matter most - you are wasting a lot of time, money and resources. Analyzing the queries that you wrote yesterday and are most familiar with might not be the best use of your time.

We invite you to go to our web site at http://www.mb-software.com, take us up on our Free 30 Day Trial offer or just make use of our extensive online Resource Center. White Papers and Webcasts are available which provide educational value to managers, administrators and software developers.