AS/400 and iSeries data replication software uses the OS/400 journaling facilities to detect changes to objects and transactions on your source system(s). Whether you are replicating data between servers for data warehousing or for high availability, simply turning journaling on can create an enormous resource requirement. Decisions to journal entire data libraries, the integrated file system or the entire system will drive the performance impact of journaling on your AS/400 and iSeries servers.


Many applications use temporary objects as part of their data processing. Some software programs place temporary physical files into permanent database libraries. Other applications might use the OS/400 integrated file system for temporary storage. Regardless of the file system, any temporary objects that are inadvertently journaled can hugely inflate the impact of journaling on your system(s).

Security auditing choices can be a significant contributor to resource requirements as well. If your application changes the authority on the same objects thousands of times per day, the system audit journal (QAUDJRN) and the data replication software will consume unnecessary resources.

Selective use of the 'Change Object Auditing (CHGOBJAUD)' and 'End Journal Physical File (ENDJRNPF)' commands helps many AS/400 and iSeries shops dramatically reduce the size of their journal receivers on a daily basis. With less data to process, the data replication software will consume less CPU, memory and disk activity, and will ultimately be able to keep data current with fewer resources.

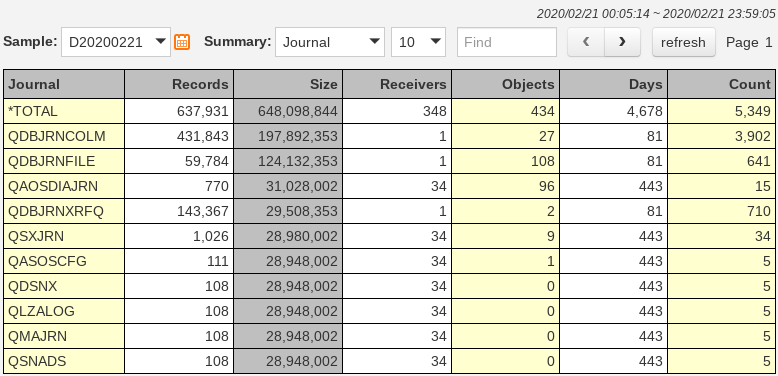

The following chart shows some sample summary level information captured by the Journal Optimizer tool:

This data can be of great use in helping to pinpoint areas where resource requirements can be reduced. It might be that some journal receivers on your system are simply not being maintained automatically. Other journals and journal receivers might be 'aged' automatically by the data replication software. The issue with these journals is not data retention, but the volume of data being generated in the first place. If your application is generating 50 gigabytes of transactions per day, a simple journal analysis might show you that 80% of the transactions (roughly 40 gigabytes per day) come from physical files used only by report jobs for sorting the data on the reports. You may be using an imaging application that utilizes a folder on the integrated file system for the manipulation of the image files. You had better not be journaling this folder!

The summary level information within the Journal Optimizer tool is a great place to start. A detailed journal analysis will be required to get to the necessary level of detail. Looking at the number of transactions by file, program, job, user and transaction type will give you the data necessary to draw some conclusions:

In this example, it appears that an application is writing 2,000 records, updating them and then deleting them - why journal them?

Other database files might have 10,000,000 'PT' (record added) transactions and 24 'CR' (member cleared) transactions. Such a file is a temporary work file that is being cleared with a CLRPFM command and should not be journaled. Only in very rare circumstances would you legitimately consume all of this CPU, memory, DASD and disk I/O to end up with an empty file on the target system.
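The kind of analysis described above can be sketched in a few lines of Python. This is only an illustration, not how the Journal Optimizer tool works: it assumes the journal entries have already been exported to simple records (for example from a DSPJRN output file), and the field names `file` and `type`, the file names, and the `min_puts` threshold are all hypothetical. The sample counts are scaled down from the figures in the text.

```python
from collections import Counter, defaultdict


def count_by(entries, *keys):
    """Count journal entries grouped by the given fields (e.g. file, type)."""
    counts = Counter()
    for entry in entries:
        counts[tuple(entry[k] for k in keys)] += 1
    return counts


def temp_file_candidates(entries, min_puts=1000):
    """Flag files whose entry mix suggests temporary work data.

    Heuristics (assumptions, tune for your own system):
      - many PT (record added) entries plus any CR (member cleared) entry
      - at least as many DL (record deleted) entries as PT entries
    """
    per_file = defaultdict(Counter)
    for entry in entries:
        per_file[entry["file"]][entry["type"]] += 1

    flagged = {}
    for name, types in per_file.items():
        puts, deletes, clears = types["PT"], types["DL"], types["CR"]
        if puts < min_puts:
            continue  # too little volume to matter
        if clears > 0:
            flagged[name] = "cleared work file"
        elif deletes >= puts:
            flagged[name] = "records written then deleted"
    return flagged


# Hypothetical sample data: a write/update/delete work file, a sort file
# that is repeatedly cleared, and an ordinary production file.
sample = (
    [{"file": "WORKF", "type": t} for t in ("PT", "UP", "DL") for _ in range(2000)]
    + [{"file": "SORTWK", "type": "PT"} for _ in range(10000)]
    + [{"file": "SORTWK", "type": "CR"} for _ in range(24)]
    + [{"file": "ORDERS", "type": "PT"} for _ in range(5000)]
    + [{"file": "ORDERS", "type": "DL"} for _ in range(100)]
)

print(count_by(sample, "file", "type")[("WORKF", "PT")])
print(temp_file_candidates(sample))
```

Files flagged this way are only candidates. Whether journaling can safely be ended for them (for instance with ENDJRNPF) still depends on how the application and the target system use them.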